Only polls conducted online correctly foretold that Brexit was a real possibility, and five other things we learned about opinion polling from the EU Referendum

- THE ONLINE POLLS WERE RIGHT (even though our last one wasn't)

The online polls in this referendum, of which more than half were by YouGov, were the only source of information that conveyed the true risk of a Brexit throughout the campaign. The online polls showed the race very close, bouncing within the margin of error either side of 50/50, with a slight advantage for the Leave campaign. Unfortunately not enough attention was paid to this evidence, and the media, campaigns, betting and financial markets continued to presume that Brexit would not happen, despite repeated warnings from YouGov that this confidence was misplaced.

Three days before the vote, YouGov showed a two point lead for Leave. It was barely reported and didn’t move the markets. A subsequent eve-of-voting poll showed the race too close to call (51-49 Remain) and, unfortunately, our final on-the-day recontact study moved an additional percentage point in the wrong direction, to 52/48 in favour of Remain. When this was announced on Sky News at 10pm, it was reported across the world as a confident prediction of a Remain victory. In reality, small movements like this between different snapshot polls are an expected part of the process (we publish a margin of error of 3% on most polls, which we encourage media to make reference to), but overall our data showed the very close race that was eventually revealed.
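To make the margin-of-error point concrete, here is a minimal sketch using the textbook formula for a simple random sample. The sample size of 1,000 is an assumption chosen because it gives roughly the 3% figure quoted above; real online panels are weighted samples, so this is only an approximation and not YouGov's actual calculation.

```python
import math


def margin_of_error(sample_size: int, proportion: float = 0.5, z: float = 1.96) -> float:
    """Approximate 95% margin of error for one share in a simple random sample."""
    return z * math.sqrt(proportion * (1.0 - proportion) / sample_size)


def too_close_to_call(share: float, sample_size: int) -> bool:
    """True when 50% lies inside the interval share +/- margin of error."""
    moe = margin_of_error(sample_size, share)
    return share - moe < 0.5 < share + moe


ASSUMED_SAMPLE_SIZE = 1000  # assumption for illustration, not a published figure

for remain_share in (0.51, 0.52):  # the two Remain figures mentioned in the text
    moe = margin_of_error(ASSUMED_SAMPLE_SIZE, remain_share)
    print(f"Remain {remain_share:.0%}: +/- {moe:.1%}, "
          f"too close to call: {too_close_to_call(remain_share, ASSUMED_SAMPLE_SIZE)}")
```

Under these assumptions both the 51% and the 52% Remain figures sit within the margin of error of an even split, which is why a one-point move between snapshots carries little information.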

Other online pollsters did better - congratulations to TNS and Opinium, the two online pollsters that recorded Leave ahead even at the end.

Every other source of information suggested that a victory for Remain was a done deal - only the online polls revealed the true state of the race. The real story of this campaign is that not enough attention was paid to good polls, not the reverse.

- THE BETTING MARKETS KNOW NOTHING

It became fashionable during the referendum campaign to observe the betting markets instead of opinion polls for reliable prediction. News websites included live tracking of betting odds on their pages and main news stories would often include references to the betting odds as well as any new polling information.

Throughout the campaign, the betting markets showed huge confidence in a Remain victory – odds on Brexit were often as long as 5:1, including on the day of the vote when at one point they hit 16:1.
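For readers unused to fractional odds, the short sketch below converts the odds quoted above into the probability they imply. It assumes the figures are odds against the outcome and ignores the bookmaker's margin, so the results are rough readings and not exact market probabilities.

```python
def implied_probability(odds_against: float, stake: float = 1.0) -> float:
    """Probability implied by fractional odds of `odds_against` to `stake`."""
    return stake / (odds_against + stake)


# Odds on Brexit quoted in the text: 5:1 during the campaign, 16:1 on the day
for odds in (5, 16):
    print(f"{odds}:1 against implies about {implied_probability(odds):.1%}")
```

On this reading, 5:1 corresponds to roughly a one-in-six chance of Brexit and 16:1 to roughly one in seventeen.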

Not only do the betting markets not have access to any special knowledge – there is no special knowledge that they could even theoretically have access to. Polls are the only primary evidence for how an election campaign is going, and the betting markets simply chart the consensus narrative which in this case, as so often, proved to be wrong. Even as the night wore on and it became increasingly clear that Leave had won, the betting exchanges still had them as second favourites (as high as 3-1 at times).

- ONLINE POLLS ARE MORE ACCURATE THAN PHONE POLLS

Finally, this controversy can now be settled once and for all. Throughout the campaign the telephone polls showed Remain comfortably ahead, sometimes by as much as 18 points, narrowing to an average 2.7% Remain lead over the final four weeks. The online polls showed Leave ahead more often than Remain, with an average lead for Leave of 1.8% over the same period.

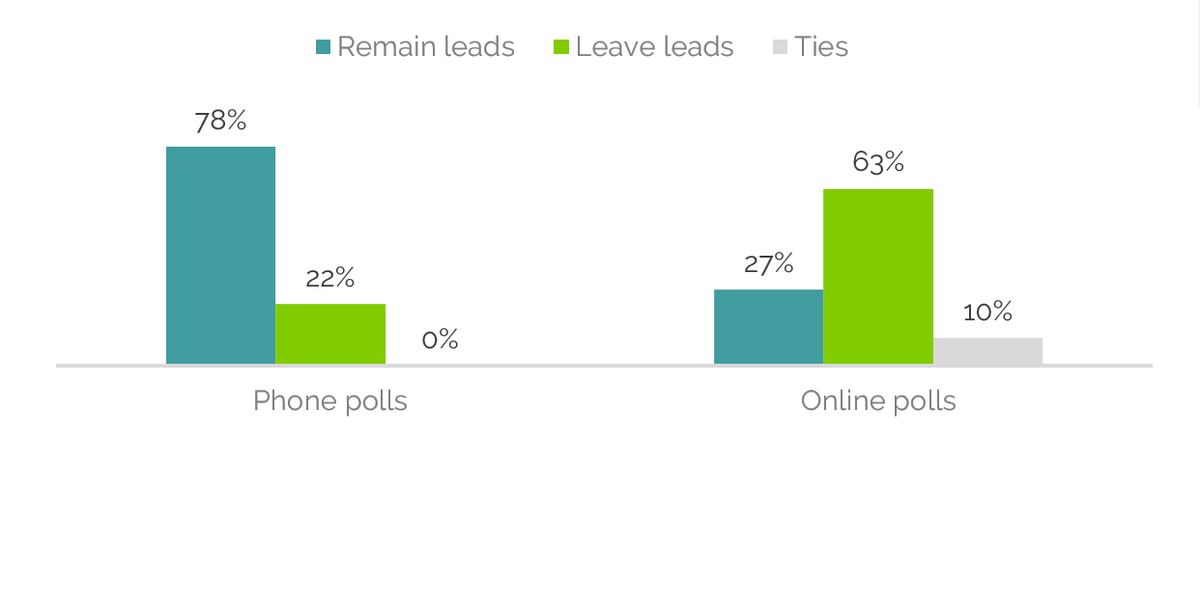

In total over the campaign period, 78% of the telephone polls showed a Remain lead, whilst 63% of the online polls showed Leave ahead, including 57% of YouGov polls.

It makes perfect sense – why would you answer a survey if a stranger calls you on the telephone? We know that as few as 5% of people do, and the number is getting smaller every day. Online polls are quick and convenient to take part in and you are paid to take part – that is why a more representative group of people is happy to answer them.

Halfway through the campaign, YouGov analysis argued that phone polls were wrong and that online polls were accurately calling a Leave lead. This was based on showing that level of education was the key to the vote, as turned out to be the case. Phone polls struggle to reach the less educated in society and it was their over-representation of graduates that skewed them towards Remain. Online polls by contrast were able to better represent the educational make-up of the UK.
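The mechanism described here, where a sample with too many graduates overstates Remain, can be sketched in a few lines. Every number below is hypothetical and chosen only to show the direction of the effect; none of it comes from YouGov's data or any published poll.

```python
def overall_share(support_by_group: dict, group_mix: dict) -> float:
    """Overall support when each group's support is weighted by its share of the mix."""
    total = sum(group_mix.values())
    return sum(support_by_group[g] * group_mix[g] for g in group_mix) / total


# Hypothetical Remain support within each education group
remain_support = {"graduate": 0.65, "non_graduate": 0.40}

# Hypothetical make-up of a sample that reaches too many graduates
sample_mix = {"graduate": 0.40, "non_graduate": 0.60}

# Hypothetical make-up of the population the sample should represent
population_mix = {"graduate": 0.25, "non_graduate": 0.75}

unweighted = overall_share(remain_support, sample_mix)
reweighted = overall_share(remain_support, population_mix)
print(f"Remain in the skewed sample: {unweighted:.1%}")
print(f"Remain after matching the population mix: {reweighted:.1%}")
```

With these made-up inputs, correcting the education mix alone moves Remain down by several points, which illustrates why the educational make-up of a sample matters so much.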

Most importantly, because respondents to our polls are members of a panel of 600,000 people across the UK and we already know a lot about them, we can select people to make a representative sample in a way that telephone pollsters calling strangers never can.

- BAD TELEPHONE POLLING MAY HAVE LOST REMAIN THE CAMPAIGN

It is possible to argue that the inaccurate data that the Remain campaign’s in-house pollster was providing caused Brexit to happen. They hired a former City trader to conduct an analysis of whether the telephone polls or the online polls were more reliable. The report, which now turns out to have been completely wrong, became extremely influential despite YouGov’s strong arguments at the time that it was based on flimsy evidence and circular logic. It was prominently featured on Newsnight and gave ballast to many commentators who wanted to believe that Remain were ahead.

One result of that report was an extraordinary method of ‘weighting by social attitude’ which essentially pushes a poll result to a previously known poll of social attitudes. As attitudes to the EU are social attitudes, it was a bizarre idea with no precedent in polling science (you might as well force-weight a sample to previously known EU Referendum attitudes). This method was used for the Remain campaign’s internal polling, and led to Populus’s day-of-voting poll showing a 55-45 victory for Remain (we don’t include it in our averages as we don’t consider it a proper poll).

It is entirely possible that if the Remain campaign had not been misled as to their margin of victory, they might have run a different, and successful, campaign.

- TURNOUT NEEDS TO BE TREATED SEPARATELY

The element we know is hardest to predict in an election is turnout – what proportions of different groups actually turn out to vote. It will always be speculative because what people claim will never match their behaviour. However, there are historical trends, and it now seems that the YouGov Turnout predictor, which has been on the website throughout the campaign, was about right in suggesting an additional percentage point or two in favour of Leave thanks to differential turnout. The underlying poll level only needs to have been a 1% Leave lead (49.5/50.5) to become the 52/48 result we eventually saw.
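The turnout arithmetic above can be checked with a small sketch. It asks how much more likely Leave supporters would need to be to vote than Remain supporters for a 50.5/49.5 poll to become a 52/48 result. The 70% Remain turnout rate is a hypothetical placeholder, and this two-group model is a simplification and not the YouGov Turnout predictor itself.

```python
def leave_share_of_votes(leave_support: float, leave_turnout: float,
                         remain_turnout: float) -> float:
    """Leave's share of votes cast, given its support and each side's turnout rate."""
    leave_votes = leave_support * leave_turnout
    remain_votes = (1.0 - leave_support) * remain_turnout
    return leave_votes / (leave_votes + remain_votes)


def required_turnout_ratio(leave_support: float, leave_result: float) -> float:
    """Leave-to-Remain turnout ratio needed to turn the poll figure into the result."""
    poll_odds = leave_support / (1.0 - leave_support)
    result_odds = leave_result / (1.0 - leave_result)
    return result_odds / poll_odds


ratio = required_turnout_ratio(leave_support=0.505, leave_result=0.52)

ASSUMED_REMAIN_TURNOUT = 0.70  # hypothetical, for illustration only
share = leave_share_of_votes(0.505, ASSUMED_REMAIN_TURNOUT * ratio, ASSUMED_REMAIN_TURNOUT)

print(f"Leave supporters need to be about {ratio - 1:.1%} more likely to vote")
print(f"Resulting Leave share of votes cast: {share:.1%}")
```

Under these assumptions a turnout advantage of around six percent among Leave supporters is enough to turn a one-point poll lead into the four-point margin seen in the result.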

We need to consider how best to represent turnout assumptions in future, which can make such a difference to the final result, but which are always more speculative than the underlying poll numbers.

- POLLING WORKS, AND YOUGOV IS HIGHLY ACCURATE

There is only one major anomaly in YouGov’s otherwise excellent polling record, and that was the General Election in 2015. There we got the story badly wrong, and we have identified and corrected the things that led to that error.

Since then YouGov has accurately forecast Jeremy Corbyn's victory in the Labour leadership, Sadiq Khan's election as London mayor with perfect precision, and the Scottish and Welsh assembly results in May. It continues a history of accuracy that stretches back many years, not just better than our competitors but highly predictive of eventual outcomes.

In the EU referendum, our statistical model (led by Prof Doug Rivers and Dr Benjamin Lauderdale) performed very well and suggests that there was indeed a move to Remain in the final week, but that it simply wasn’t quite enough. We will be investing further in these techniques in the future as they help to smooth out the ‘noise’ of different samples and are better at revealing underlying trends. One of the lessons for us may be to place greater emphasis on models of this kind instead of snapshot polls which are subject to noise and sampling error.

For YouGov it will always sting that, having been on the ‘Brexit-y’ fringe of all the predictions throughout the EU Referendum campaign, and having insisted that the race was close right up until the end, our final poll number on the night of the vote moved a percentage point in the direction of Remain. This number attracted the most attention, in part because it confirmed what people were already expecting.

But overall we are proud that, in the face of complacency across the world media and markets, we were one of the few voices accurately assessing the possibility of Brexit as very real.

In turbulent times such as now, when parties are selecting leaders and politicians are trying to enact the will of the people, understanding of public opinion plays a crucial role in making good decisions. It gives voters a seat at the table, even outside elections, and YouGov will continue to represent their views as best we can.